TL;DR

A mid-sized ecommerce company had its AWS bill swing between $150K and $250K a month with no clear explanation. We ran a 10-minute forensic audit using Deductive AI. The issue wasn’t inefficient resources. It was hidden architectural drift:

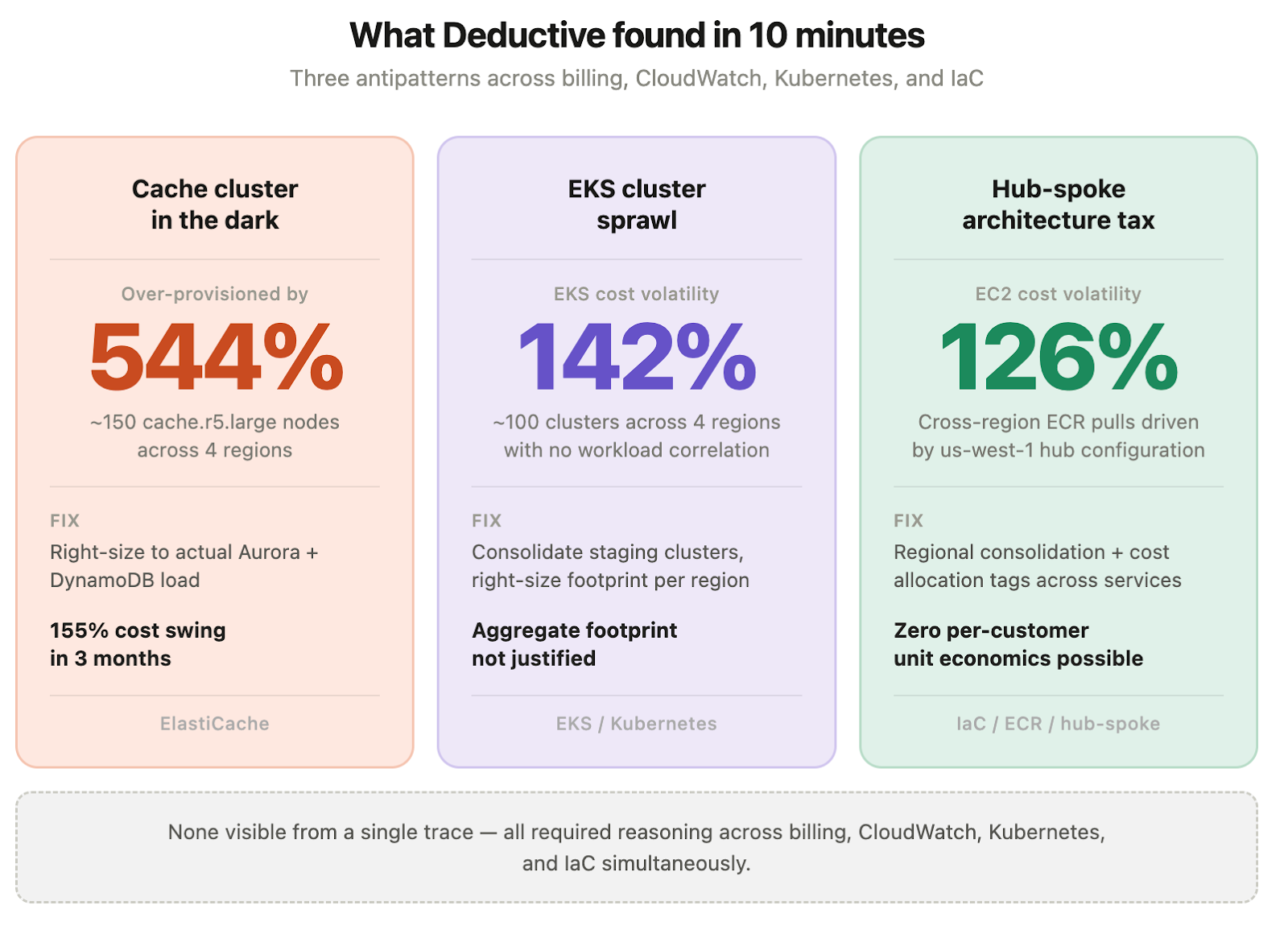

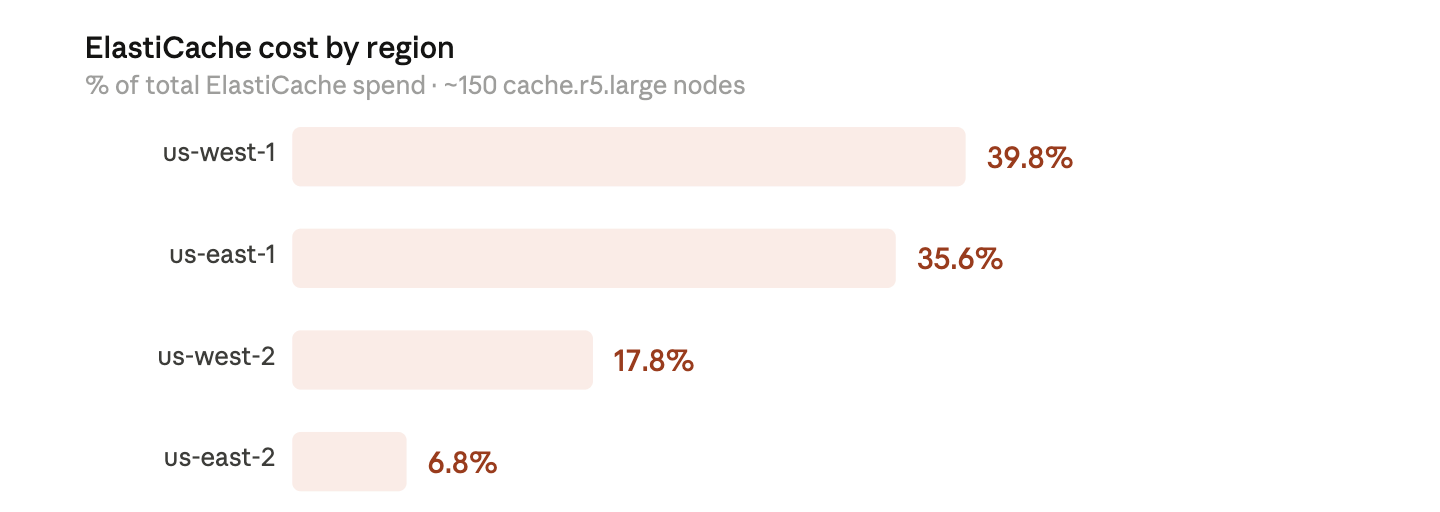

- Cache cluster drift. ElastiCache scaled to ~150 cache.r5.large nodes across four regions, 544% over-provisioned for the load. Aurora Serverless and DynamoDB were handling traffic. The cache was just idling, billing quietly.

- Cross-region ECR tax. The container registry lived in a different region than the EKS clusters pulling from it. Every image pull crossed regions. This doesn’t surface in Cost Explorer. You only see it when you align registry location with cluster topology.

- Cluster sprawl baseline. ~100 EKS clusters across four regions. Each one carried worker nodes, load balancers, and networking overhead, including staging environments that didn’t need isolation. No single cluster looked expensive. The aggregate was.

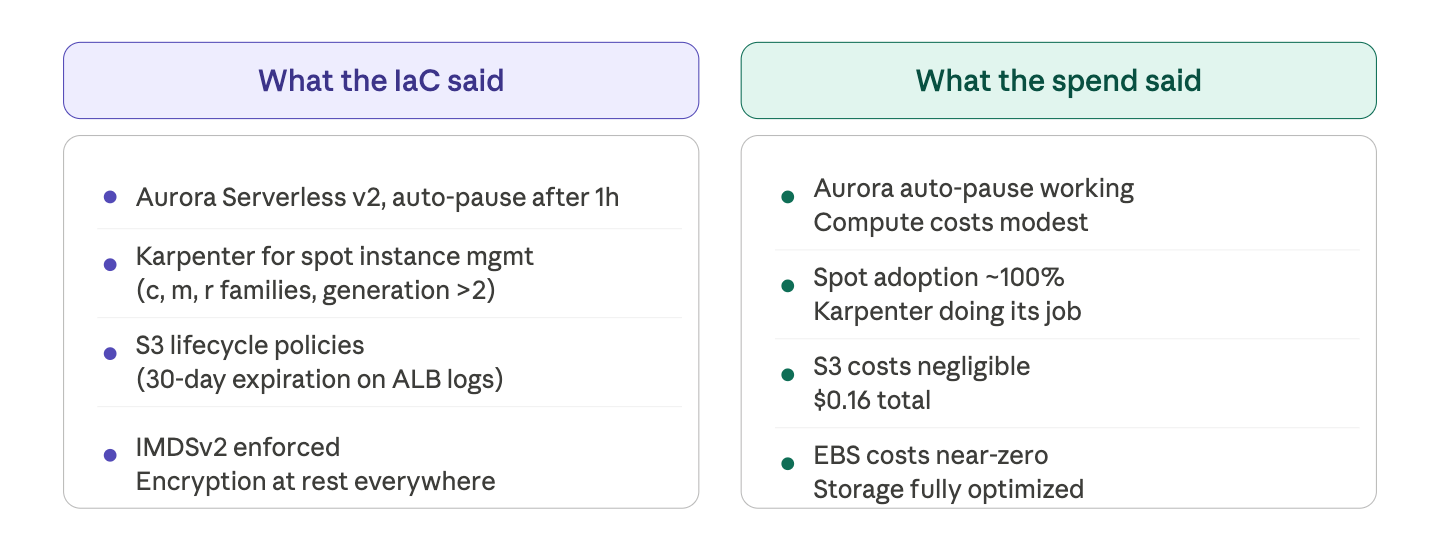

- The IaC paradox. Resource-level optimization was near-perfect. Spot usage high. Aurora auto-pause enabled. S3 negligible. Nothing looked wrong in isolation. The waste was structural.

Cloud forensics is a reasoning problem, not a data problem. The signals are already there. The gap is system-level inference.

The Setup: A Simple Question with a Complicated Answer

It started with a deceptively simple question from one of our mid-sized ecommerce customers: “How can Deductive AI help us reduce our cloud cost?”

Like most teams, they had a rough sense of spend, but no precise model of where or why it was changing. The footprint was non-trivial: ~100 Kubernetes clusters, up to 10,000 nodes at peak, 700 services across four regions, and a monthly bill swinging between $150K and $250K. Nothing obviously broken. No clear explanation.

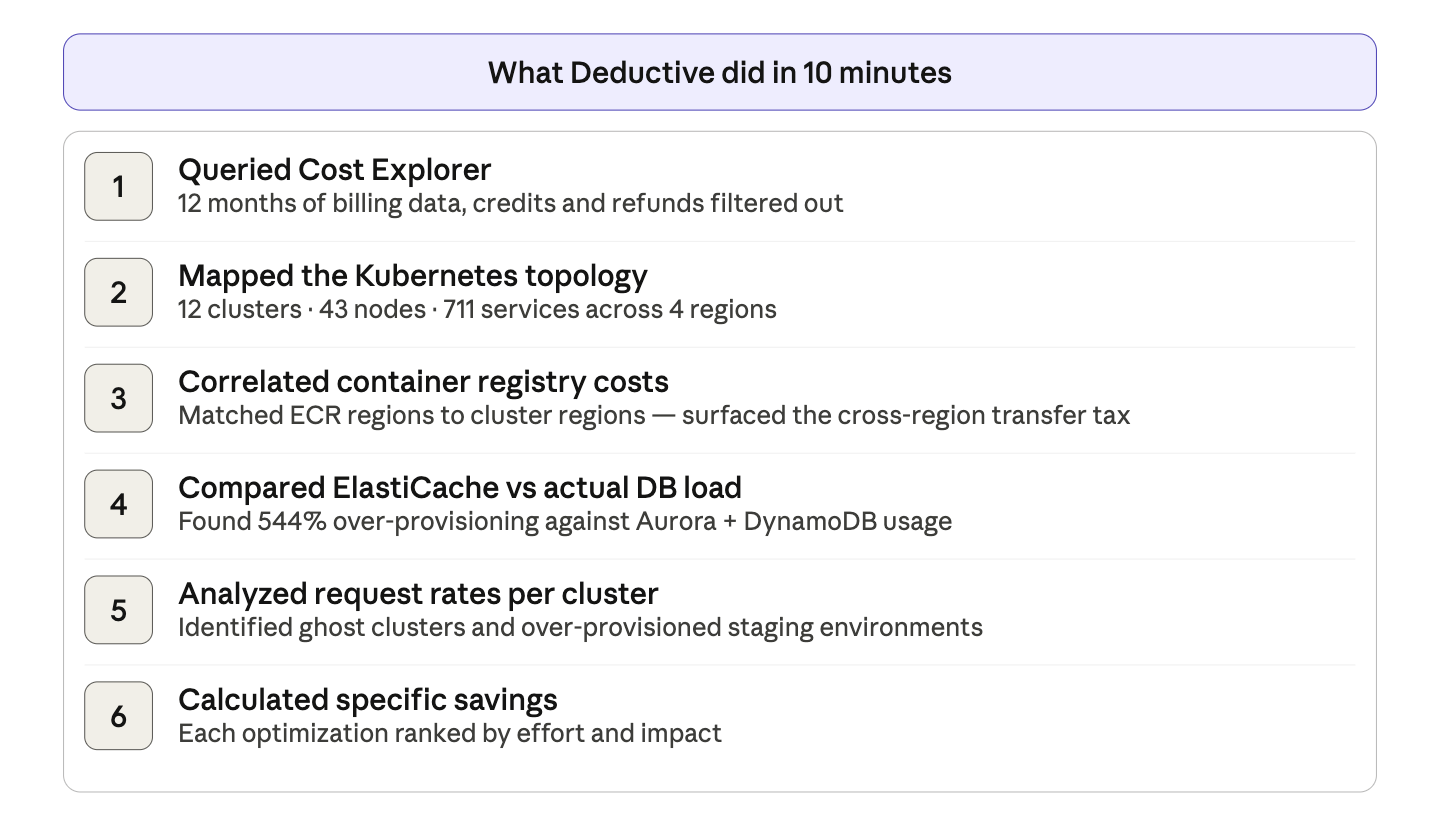

Instead of building another dashboard, we suggested a different approach: give Deductive access to AWS Cost Explorer via MCP (Model Context Protocol) and ask it to run a full forensic audit of their cloud spend. No pre-baked queries. No hypotheses. Just system-level reasoning over the raw signals.

What followed wasn’t faster analysis. It was a different class of analysis altogether.

The 10-Minute Audit That Replaced a Week of Work

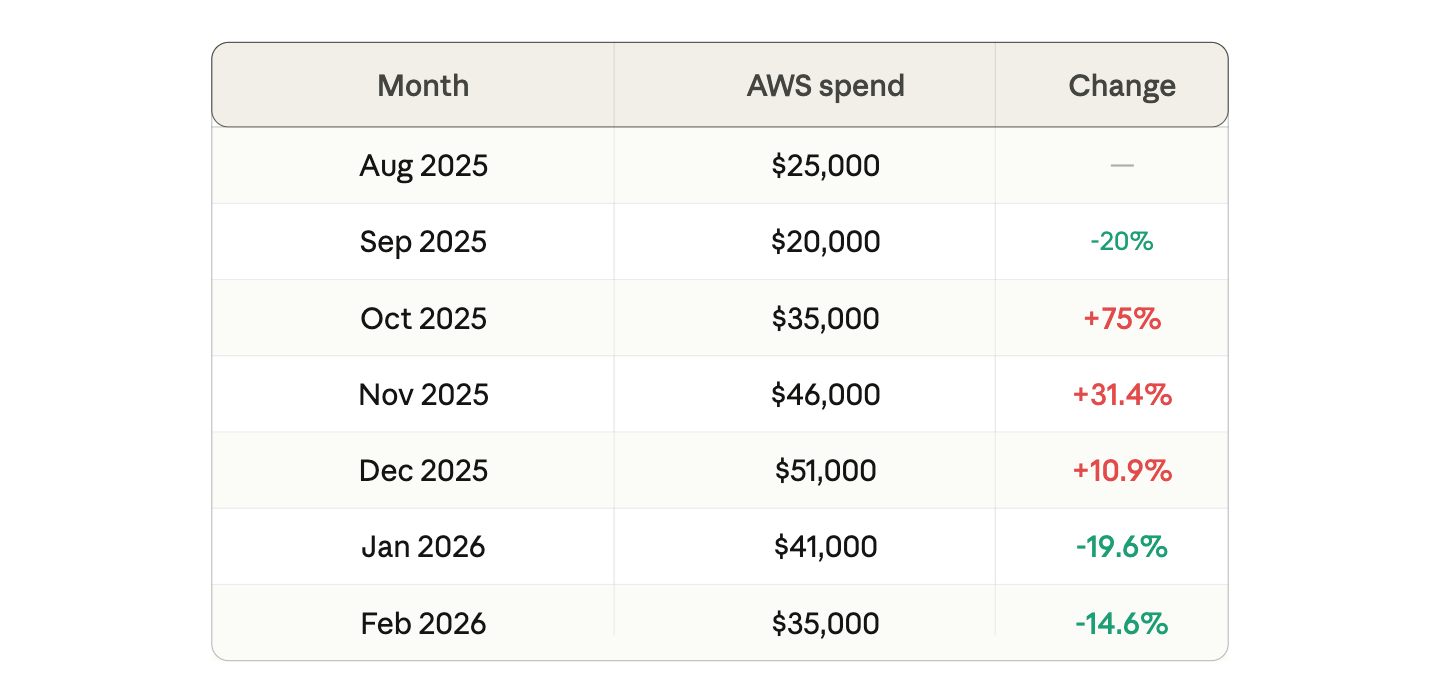

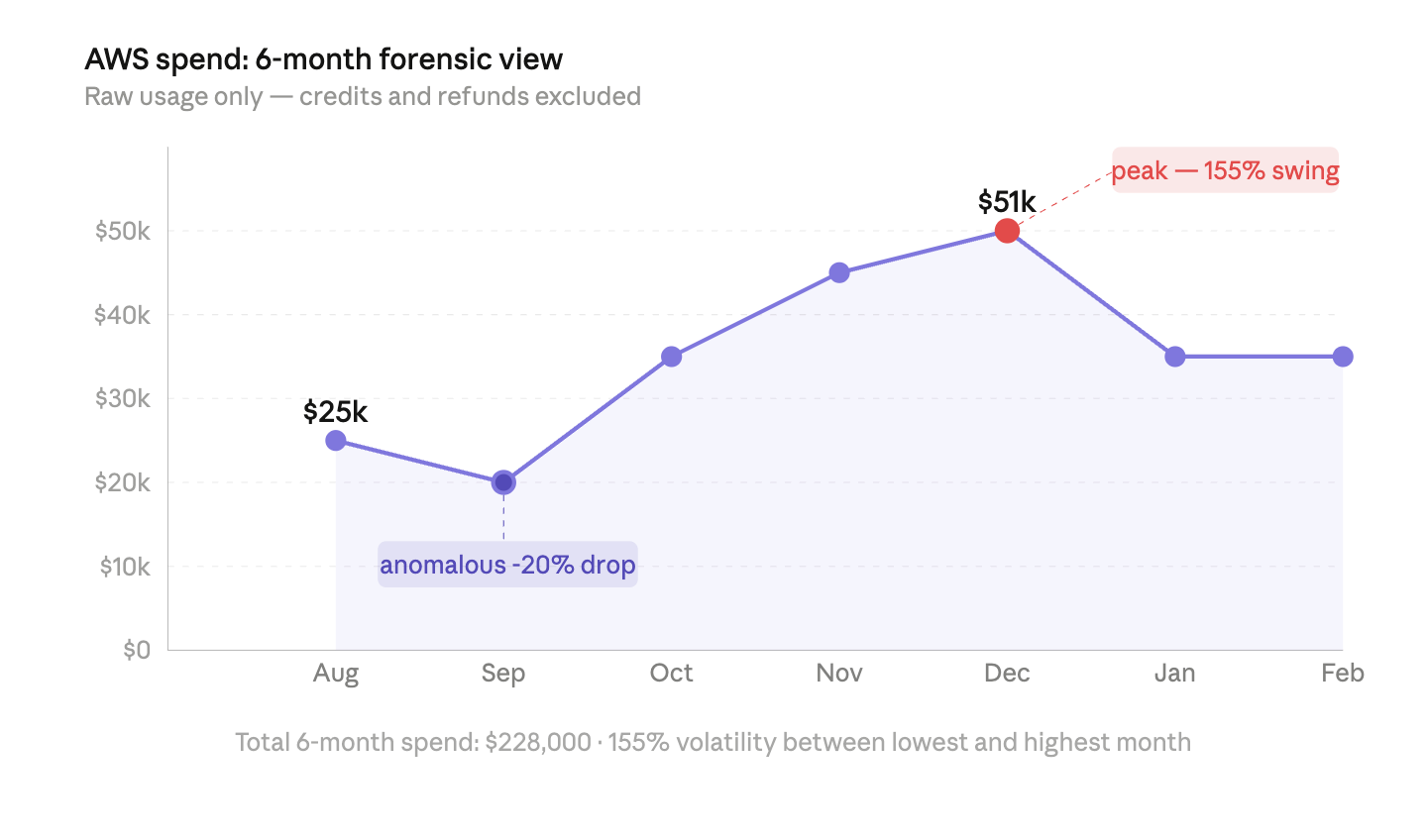

Within minutes, Deductive pulled the customer's complete 12-month cost history, broke it down by service, region, and usage type, and surfaced the first surprise:

Total 6-month spend: $228,000 (excluding all credits and refunds — raw usage only).

A human analyst would look at this table and see "costs went up, then down." Deductive saw something more precise: 155% cost volatility between the lowest and highest months, plus ~20% anomalous drops in September and January that warranted investigation.
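Both figures are simple arithmetic over monthly totals. The minimal Python sketch below assumes that "volatility" means the swing from the cheapest month to the most expensive one, relative to the cheapest, and it flags any month-over-month drop of 15% or more. The definition, the threshold, and the monthly figures in the example are our assumptions for illustration. They are not necessarily how Deductive computes these numbers, and the figures are not the customer's data.

```python
def swing_pct(monthly_costs: list[float]) -> float:
    """Swing between the cheapest and most expensive month, as a % of the cheapest.

    This is an assumed definition of "cost volatility"; others exist
    (for example, the coefficient of variation).
    """
    low, high = min(monthly_costs), max(monthly_costs)
    return (high - low) / low * 100


def flag_drops(months: list[str], monthly_costs: list[float],
               threshold_pct: float = 15.0) -> list[tuple[str, float]]:
    """Return (month, drop %) for each month-over-month drop of at least threshold_pct.

    The 15% default threshold is an assumption chosen for illustration.
    """
    flagged = []
    for i in range(1, len(monthly_costs)):
        prev, cur = monthly_costs[i - 1], monthly_costs[i]
        drop = (prev - cur) / prev * 100
        if drop >= threshold_pct:
            flagged.append((months[i], round(drop, 1)))
    return flagged


# Hypothetical monthly totals in $K, for illustration only.
months = ["Aug", "Sep", "Oct", "Nov", "Dec", "Jan"]
costs = [100.0, 80.0, 120.0, 150.0, 160.0, 128.0]
print(f"swing: {swing_pct(costs):.0f}%")
print(flag_drops(months, costs))
```

In a real audit, the monthly totals would come from Cost Explorer grouped by month, and the same two functions could be run per service.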

But the real story wasn't in the totals. It was in the breakdown.

Anomalies Beneath the Surface

Traditional cost dashboards show you where your money goes. Deductive showed us where it shouldn't be going.

- ElastiCache volatility: a 155% cost swing from a caching layer that was scaling unpredictably. Deductive flagged it as the highest-volatility service and tied it to regional deployment patterns.

- EKS extended support: 142% volatility, with Kubernetes costs fluctuating without clear correlation to workload. Deductive identified inefficient scaling and recommended workload optimization.

- EC2 m6a.2xlarge (us-west-1): 126% volatility on an instance type that cost more than its utilization justified.

Crucially, Deductive didn't stop at "ElastiCache is expensive." It correlated ElastiCache with ECR cross-region pulls, EKS cluster scaling, and a hub-spoke architecture (us-west-1 as primary hub) that was driving unnecessary data transfer costs.

Deductive also discovered that the customer was running ~100 EKS clusters across four US regions (us-west-1, us-east-1, us-east-2, us-west-2). Each cluster carried a baseline cost: at minimum, a few worker nodes, load balancers, and networking overhead. The question wasn't whether individual clusters were expensive; they weren't. The question was whether the aggregate footprint was justified, especially for staging environments that may not need dedicated clusters.
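The sprawl argument is multiplication: a small fixed cost per cluster, times roughly 100 clusters. The sketch below makes that explicit. Every per-cluster rate in it is a hypothetical placeholder. That includes the $0.10/hour control-plane rate, which is AWS's published price for EKS standard support at the time of writing; clusters on extended-support versions are billed at a higher hourly rate. Substitute your own figures from Cost Explorer.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common billing approximation (8,760 h / 12)


@dataclass
class ClusterBaseline:
    """Fixed monthly cost a cluster incurs before it runs any real workload.

    All default rates and counts are hypothetical placeholders, not price quotes.
    """
    control_plane_per_hour: float = 0.10  # EKS standard-support list price; verify
    min_nodes: int = 2                    # assumed minimum worker nodes
    node_per_hour: float = 0.10           # assumed on-demand rate per node
    load_balancers: int = 1               # assumed load balancers per cluster
    lb_per_hour: float = 0.0225           # assumed hourly load balancer charge
    nat_gateways: int = 1                 # assumed networking overhead
    nat_per_hour: float = 0.045           # assumed hourly NAT gateway charge

    def monthly(self) -> float:
        hourly = (
            self.control_plane_per_hour
            + self.min_nodes * self.node_per_hour
            + self.load_balancers * self.lb_per_hour
            + self.nat_gateways * self.nat_per_hour
        )
        return hourly * HOURS_PER_MONTH


def fleet_baseline(num_clusters: int, baseline: ClusterBaseline) -> float:
    """Aggregate idle cost: no single cluster looks expensive, but the fleet does."""
    return num_clusters * baseline.monthly()


per_cluster = ClusterBaseline()
print(f"per cluster: ${per_cluster.monthly():,.0f}/month")
print(f"100 clusters: ${fleet_baseline(100, per_cluster):,.0f}/month")
```

Even the control plane alone adds up: at $0.10/hour, 100 clusters cost 100 × $0.10 × 730 ≈ $7,300 a month before a single pod is scheduled.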

The Cache Cluster That Grew in the Dark

Deductive's next finding was the most dramatic. The ElastiCache infrastructure had undergone a 155% cost increase from September to December, growing from a modest footprint to ~150 cache.r5.large nodes spread across four regions.

Here's where Deductive's cross-system correlation became invaluable. It compared the ElastiCache traffic pattern with DynamoDB and Aurora usage, drew on its understanding of the system architecture, and treated Aurora Serverless v2 and DynamoDB usage as the baseline load behind the cache.

The conclusion was unavoidable: the customer had scaled its ElastiCache clusters far beyond what the load required, because the load on the databases behind the cache was low and predictable. It was like hiring 10 security guards for a lemonade stand.
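The over-provisioning figure comes down to comparing provisioned capacity with what the measured load needs. The minimal sketch below assumes a single per-node throughput ceiling and a target utilization. Both are hypothetical parameters, and real sizing should also account for memory, replicas, and failover headroom. Under this definition, "544% over-provisioned" means the provisioned capacity was about 6.4 times what the load required.

```python
import math


def required_nodes(peak_ops_per_sec: float, node_ops_per_sec: float,
                   target_utilization: float = 0.6) -> int:
    """Nodes needed to serve the observed peak at the target utilization.

    node_ops_per_sec and target_utilization are assumptions you must
    measure or choose for your own workload.
    """
    return math.ceil(peak_ops_per_sec / (node_ops_per_sec * target_utilization))


def overprovision_pct(provisioned: int, required: int) -> float:
    """Excess capacity as a percentage of what is required (0% means right-sized)."""
    return (provisioned - required) / required * 100


# Hypothetical inputs: in the audit, the baseline came from the
# Aurora Serverless v2 and DynamoDB usage behind the cache.
need = required_nodes(peak_ops_per_sec=500_000, node_ops_per_sec=40_000, target_utilization=0.6)
print(need, f"{overprovision_pct(150, need):.0f}%")
```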

The IaC Cross-Reference

This is where Deductive's ability to correlate infrastructure-as-code with actual cloud spend proved most valuable. We pointed Deductive at the customer's infrastructure-as-code (IaC) repository, which contains Pulumi and Terraform code, and asked it to compare what was provisioned against what was consumed.
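A first pass at "provisioned versus consumed" can be scripted against Terraform's machine-readable state. The sketch below walks the JSON that `terraform show -json` prints for the current state, where resources sit under `values.root_module` and nested modules sit under `child_modules`. It totals `num_cache_nodes` on managed `aws_elasticache_cluster` resources, grouped by node type. It deliberately ignores Pulumi stacks and replication-group sizing, and the inline state is a hypothetical, heavily trimmed example. You can then compare the totals with the node count the measured load actually requires.

```python
import json
from collections import Counter


def iter_resources(module: dict):
    """Yield every resource in a `terraform show -json` module, recursing into child modules."""
    yield from module.get("resources", [])
    for child in module.get("child_modules", []):
        yield from iter_resources(child)


def provisioned_cache_nodes(state_json: str) -> Counter:
    """Total num_cache_nodes per node_type across managed aws_elasticache_cluster resources."""
    state = json.loads(state_json)
    totals: Counter = Counter()
    root = state.get("values", {}).get("root_module", {})
    for res in iter_resources(root):
        if res.get("mode") == "managed" and res.get("type") == "aws_elasticache_cluster":
            values = res.get("values", {})
            # num_cache_nodes may be null for some configurations; treat it as 0.
            totals[values.get("node_type", "unknown")] += values.get("num_cache_nodes") or 0
    return totals


# Hypothetical, heavily trimmed state, for illustration only.
example_state = json.dumps({
    "values": {
        "root_module": {
            "resources": [
                {"mode": "managed", "type": "aws_elasticache_cluster",
                 "values": {"node_type": "cache.r5.large", "num_cache_nodes": 3}},
            ],
            "child_modules": [{
                "address": "module.us_east_1",
                "resources": [
                    {"mode": "managed", "type": "aws_elasticache_cluster",
                     "values": {"node_type": "cache.r5.large", "num_cache_nodes": 4}},
                    {"mode": "managed", "type": "aws_s3_bucket", "values": {}},
                ],
            }],
        }
    }
})
print(provisioned_cache_nodes(example_state))
```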

The IaC-to-spend correlation revealed that the customer's cost optimizations for individual resources were excellent. Spot instances, serverless databases, and lifecycle policies were all doing exactly what they should.

The cost inefficiency wasn't at the resource level. It was at the architecture level: too many regions, an incorrect cache-scaling strategy, centralized ECR registries with cross-region pulls, and no cost allocation tags to track spend per customer. No amount of instance right-sizing would fix structural costs.
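Of these gaps, the missing cost allocation tags are the easiest to measure. The minimal sketch below assumes you have an inventory of resources with their tags and costs, for example exported from your IaC or from the Resource Groups Tagging API. It also assumes your organization standardizes on a tag key such as `customer`. The key, ARNs, and costs are hypothetical. The function reports what share of spend cannot be attributed to any customer.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    arn: str                 # hypothetical resource identifier
    monthly_cost: float      # cost attributed to this resource
    tags: dict[str, str] = field(default_factory=dict)


def untagged_spend_share(resources: list[Resource], required_key: str = "customer") -> float:
    """Fraction of total spend (0.0 to 1.0) on resources missing the required tag."""
    total = sum(r.monthly_cost for r in resources)
    if total == 0:
        return 0.0
    untagged = sum(r.monthly_cost for r in resources if not r.tags.get(required_key))
    return untagged / total


inventory = [
    Resource("arn:aws:elasticache:us-west-1:123456789012:cluster:cache-a", 900.0),
    Resource("arn:aws:eks:us-east-1:123456789012:cluster/shop-a", 300.0, {"customer": "acme"}),
    Resource("arn:aws:ec2:us-west-1:123456789012:instance/i-0abc", 800.0, {"team": "platform"}),
]
print(f"{untagged_spend_share(inventory):.0%} of spend has no customer tag")
```

Note that tags only appear in Cost Explorer and Cost and Usage Reports after they have been activated as cost allocation tags in the Billing console.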

Why This Matters: The Case for AI-Driven Cloud Forensics

The Old Way Doesn't Scale

Traditional cloud cost analysis follows a predictable pattern: export a CSV from Cost Explorer, open it in a spreadsheet, sort by cost, squint at the numbers, and maybe catch the obvious stuff. This approach has three fatal flaws:

- It's siloed. Cost data lives in billing. Utilization data lives in CloudWatch. Kubernetes metrics live in Prometheus. Cross-referencing them manually is tedious and error-prone.

- It's backward-looking. By the time you notice a cost spike in your monthly review, you've already been paying it for weeks.

- It misses correlations. A human looking at ECR costs won't instinctively check which regions the Kubernetes clusters are deployed in. Deductive did.
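The ECR-to-cluster correlation is easy to reproduce once the two inventories sit side by side. The minimal sketch below assumes you already have each cluster's region and the image URIs its workloads pull, for example from pod specs. Private ECR image URIs follow the `<account>.dkr.ecr.<region>.amazonaws.com/<repo>:<tag>` pattern, so the registry region can be read from the hostname. The cluster names, pull counts, image sizes, and $0.02/GB inter-region rate are assumptions for illustration. Check current AWS data transfer pricing before relying on the estimate.

```python
import re
from dataclasses import dataclass

# Private ECR registry hostnames look like <account>.dkr.ecr.<region>.amazonaws.com
ECR_HOST = re.compile(r"^\d{12}\.dkr\.ecr\.([a-z0-9-]+)\.amazonaws\.com/")


@dataclass
class Pull:
    cluster: str          # hypothetical cluster name
    cluster_region: str   # region the EKS cluster runs in
    image_uri: str        # image the cluster's workloads pull
    pulls_per_month: int  # assumed pull count
    image_gb: float       # assumed compressed image size in GB


def cross_region_pulls(pulls: list[Pull], usd_per_gb: float = 0.02) -> list[tuple[str, str, str, float]]:
    """Return (cluster, cluster_region, registry_region, est. monthly USD) for cross-region pulls.

    usd_per_gb is an assumed inter-region transfer rate; verify it against current pricing.
    """
    findings = []
    for p in pulls:
        match = ECR_HOST.match(p.image_uri)
        if not match:
            continue  # not a private ECR image (e.g. Docker Hub), so skip it
        registry_region = match.group(1)
        if registry_region != p.cluster_region:
            cost = p.pulls_per_month * p.image_gb * usd_per_gb
            findings.append((p.cluster, p.cluster_region, registry_region, round(cost, 2)))
    return findings


pulls = [
    Pull("checkout-prod", "us-east-1", "123456789012.dkr.ecr.us-west-1.amazonaws.com/checkout:1.4", 20_000, 0.5),
    Pull("catalog-prod", "us-west-1", "123456789012.dkr.ecr.us-west-1.amazonaws.com/catalog:2.0", 15_000, 0.4),
]
print(cross_region_pulls(pulls))
```

Only the first pull is reported, because the catalog cluster pulls from a registry in its own region.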

What Makes AI Different

In our 10-minute investigation, Deductive performed analysis that would have taken a human team days.

The key insight isn't that AI is faster (though it is). It's that AI reasons across data boundaries that humans naturally silo. The connection between ECR region, cluster location, and data transfer costs spans three different AWS services. Deductive found it because it doesn't think in service silos; it thinks in systems.

The Hidden Cost of Not Looking

The customer's monthly expenses aren't outrageous by any standard. But the 155% cost volatility between months tells a story of infrastructure that's reacting rather than planning. The October-December spike wasn't caused by a traffic surge; it was caused by provisioning decisions that nobody reviewed.

Every engineering team has a version of this story. The cache cluster grew because someone scaled it up during an incident, never scaled it back down, and added a scaling policy based only on the request patterns of a few hot shards. Container images shipped across regions because the ECR registry was set up in a different region than the clusters it serves.
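A scaling policy keyed to hot shards is a common way for a cache to outgrow its load. When one replica count is applied to every shard (as with Terraform's `replicas_per_node_group`, for instance), sizing for the hottest shard buys that capacity for all shards. The sketch below compares the two sizing rules. The shard loads and per-node capacity are hypothetical, and the model ignores memory and failover headroom.

```python
import math


def nodes_sized_for_hottest_shard(shard_ops: list[float], node_ops: float) -> int:
    """Uniform sizing: every shard gets enough nodes for the hottest shard's load."""
    per_shard = math.ceil(max(shard_ops) / node_ops)
    return per_shard * len(shard_ops)


def nodes_sized_per_shard(shard_ops: list[float], node_ops: float) -> int:
    """Each shard is sized for its own load, with at least one node per shard."""
    return sum(max(1, math.ceil(ops / node_ops)) for ops in shard_ops)


# Hypothetical: 10 shards, one of them hot, 20k ops/s per node.
shards = [160_000.0] + [15_000.0] * 9
print(nodes_sized_for_hottest_shard(shards, 20_000.0), nodes_sized_per_shard(shards, 20_000.0))
```

With these made-up inputs, sizing for the hot shard needs 80 nodes, while per-shard sizing needs 17. A single hot shard multiplies the fleet several times over.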

These aren't failures of engineering. They're failures of visibility. Without cost allocation tags, budgets, and multi-region AWS Config, you can't do per-customer unit economics, you can't detect anomalies early, and you can't catch drift. Governance isn't a cost; it's the infrastructure that prevents costs from becoming surprises.

Conclusion

Cloud forensics is a reasoning problem, not a data problem. The data already exists across Cost and Usage Reports, CloudWatch, and your infrastructure definitions. The gap is in correlating it across services, regions, and time to surface what you didn’t know to look for. Deductive did all of this in one session. No scripts. No manual aggregation. No week-long spreadsheet exercises.

The transformation: from "We should probably look at our cloud costs" to "Here's a prioritized optimization plan with specific savings, implementation timelines, and the architectural changes to get started."

Every infrastructure has stories hiding in its billing data: cost anomalies that explain themselves once you correlate them with the right metrics, provisioning decisions that made sense once but don't anymore, and architectural patterns that quietly drain budget month after month. The question isn't whether your cloud bill has hidden costs. It's whether you've looked.

Connect Deductive to your infrastructure and find out.

This analysis was performed using Deductive AI against production AWS infrastructure. All cost figures reflect actual resource consumption with credits and refunds excluded.
